With the rapid progress of remote sensing (RS) observation technologies, cross-modal RS image-sound retrieval has attracted some attention in recent years.

However, existing methods perform cross-modal image-sound retrieval with high-dimensional real-valued features, which require more storage than low-dimensional binary features (i.e., hash codes).
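
To make the storage argument concrete, the following minimal sketch compares the footprint of a real-valued feature with that of a binary hash code, and shows how two hash codes can be compared by Hamming distance. The 512-dimensional float32 feature, the 64-bit code length, and the example bit patterns are illustrative assumptions, not values taken from the paper.

```python
def storage_bytes_real(dim, bytes_per_value=4):
    """Bytes needed for a real-valued feature (float32 assumed: 4 bytes per value)."""
    return dim * bytes_per_value


def storage_bytes_hash(bits):
    """Bytes needed for a binary hash code, rounded up to whole bytes."""
    return (bits + 7) // 8


def hamming_distance(code_a, code_b):
    """Number of differing bits between two hash codes stored as Python ints."""
    return bin(code_a ^ code_b).count("1")


# Hypothetical sizes: a 512-d float32 feature versus a 64-bit hash code.
print(storage_bytes_real(512))  # 2048
print(storage_bytes_hash(64))   # 8

# Hypothetical 8-bit codes for an image and a sound.
image_code = 0b10110010
sound_code = 0b10010011
print(hamming_distance(image_code, sound_code))  # 2
```

Under these assumed sizes the hash code is 256 times smaller, and comparing two codes reduces to an XOR followed by a bit count.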

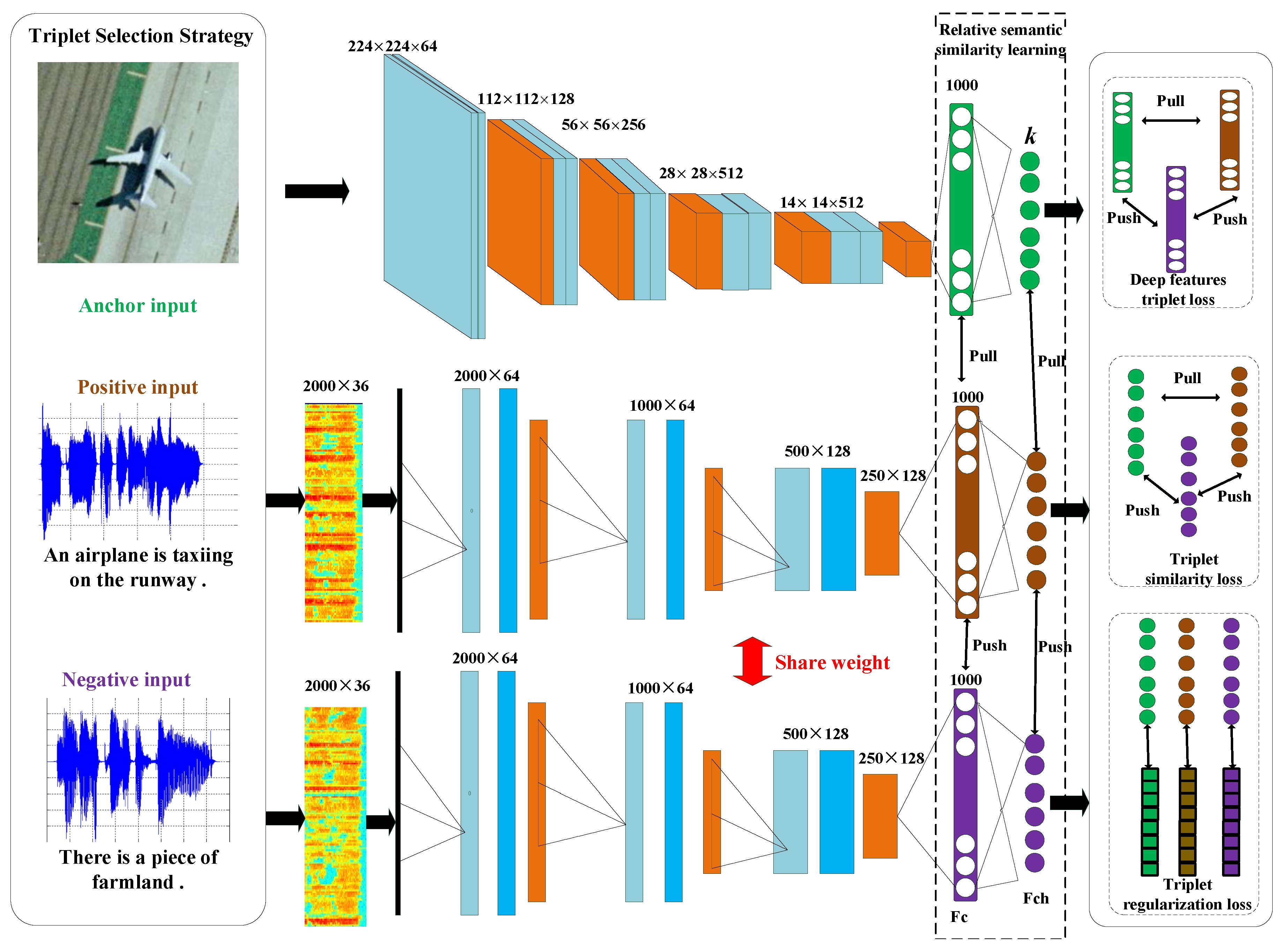

Moreover, these methods cannot directly encode relative semantic similarity relationships. To tackle these issues, we propose a new deep cross-modal RS image-sound hashing approach, called deep triplet-based hashing (DTBH), which integrates hash code learning and relative semantic similarity relationship learning into an end-to-end network. Specifically, the proposed DTBH method designs a triplet selection strategy to select effective triplets.

Moreover, to encode relative semantic similarity relationships, we propose an objective function that ensures the anchor images are more similar to the positive sounds than to the negative sounds.
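
The idea of keeping an anchor image closer to a positive sound than to a negative sound can be illustrated with a generic margin-based triplet loss. This is a sketch of the standard formulation, not the exact objective used in DTBH; the squared Euclidean distance, the margin value, and the four-dimensional example vectors are all assumptions made for illustration.

```python
def squared_distance(u, v):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def triplet_margin_loss(anchor, positive, negative, margin=1.0):
    """Generic triplet loss: zero once the positive is closer than the
    negative by at least `margin`, positive otherwise."""
    gap = squared_distance(anchor, positive) - squared_distance(anchor, negative)
    return max(0.0, margin + gap)


# Hypothetical hash-like codes for one (image, sound, sound) triplet.
anchor_image = [0.9, -0.8, 0.7, -0.9]
positive_sound = [0.8, -0.7, 0.9, -0.8]
negative_sound = [-0.9, 0.8, 0.6, 0.7]

# The positive sound is already much closer, so the loss vanishes.
print(triplet_margin_loss(anchor_image, positive_sound, negative_sound))

# Swapping the two sounds violates the ordering and yields a positive loss.
print(triplet_margin_loss(anchor_image, negative_sound, positive_sound))
```

A triplet contributes to training only when its loss is positive, which is one reason why selecting informative triplets matters in practice.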

In addition, a triplet regularized loss term leverages an approximate ℓ1-norm of hash-like codes and hash codes and can effectively reduce the information loss between hash-like codes and hash codes.
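
One way to picture such a regularizer is as a penalty on the gap between the continuous hash-like codes and their binarized counterparts. The sketch below uses log cosh as a smooth stand-in for the absolute value, which is a common surrogate for the ℓ1-norm in the hashing literature; whether DTBH uses this particular surrogate, and the sign-based binarization shown here, are assumptions.

```python
import math


def binarize(hash_like):
    """Map each hash-like value to a hash bit in {-1, +1} by its sign."""
    return [1.0 if x >= 0 else -1.0 for x in hash_like]


def smooth_l1_gap(hash_like):
    """Smooth approximation of the l1 distance between hash-like codes and
    their binarized hash codes, using log(cosh(x)) in place of |x|."""
    codes = binarize(hash_like)
    return sum(math.log(math.cosh(h - b)) for h, b in zip(hash_like, codes))


# Hypothetical hash-like codes: the first is far from binary, the second close.
far_from_binary = [0.1, -0.2, 0.3, -0.1]
near_binary = [0.95, -0.9, 0.99, -0.97]

print(smooth_l1_gap(far_from_binary))  # larger penalty
print(smooth_l1_gap(near_binary))      # penalty close to zero
```

Minimizing a term of this kind pushes the hash-like codes toward ±1, so that little information is lost when they are finally quantized into hash codes.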

Extensive experiments showed that the DTBH method outperforms other state-of-the-art cross-modal image-sound retrieval methods.

The new method was proposed by a research team led by Prof. Dr. LU Xiaoqiang from the Xi'an Institute of Optics and Precision Mechanics (XIOPM) of the Chinese Academy of Sciences (CAS). For the task of querying RS images with sounds, it achieved a mean average precision (mAP) of up to 60.13% on the UCM dataset, 87.49% on the Sydney dataset, and 22.72% on the RSICD dataset. For the task of querying sounds with RS images, it achieved an mAP of 64.27% on the UCM dataset, 92.45% on the Sydney dataset, and 23.46% on the RSICD dataset.
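
For readers unfamiliar with the metric, the sketch below shows how mean average precision is commonly computed from ranked retrieval results. It follows the textbook definition over the full ranked list; the exact evaluation protocol behind the figures above (for example, any top-k cutoff) is not stated here, and the relevance lists are made-up examples.

```python
def average_precision(ranked_relevance):
    """Average precision for one query, given a ranked list of booleans
    marking whether each retrieved item is relevant."""
    hits = 0
    precision_sum = 0.0
    for rank, relevant in enumerate(ranked_relevance, start=1):
        if relevant:
            hits += 1
            precision_sum += hits / rank  # precision at this relevant rank
    return precision_sum / hits if hits else 0.0


def mean_average_precision(all_ranked_relevance):
    """Mean of the per-query average precision values."""
    if not all_ranked_relevance:
        return 0.0
    total = sum(average_precision(r) for r in all_ranked_relevance)
    return total / len(all_ranked_relevance)


# Hypothetical results for two queries, ranked by Hamming distance.
query_results = [
    [True, False, True],  # AP = (1/1 + 2/3) / 2
    [False, True],        # AP = 1/2
]
print(mean_average_precision(query_results))
```

A higher mAP means that relevant items tend to appear nearer the top of the ranked list across all queries.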

Future work will focus on incorporating the balance property of hash codes to further improve image-sound retrieval performance.

The proposed framework of deep triplet-based hashing (DTBH). (Image by XIOPM)

(Original research article “Remote Sens” (2020) https://doi.org/10.3390/rs12010084)